X enthusiast and Reddit shareholder Sam Altman had an unusual realization this week: social media is starting to feel less human. On Monday, Altman posted that bots have made it nearly impossible to tell whether posts are written by real people.

The thought struck him while browsing the r/Claudecode subreddit, a community originally focused on Anthropic’s Claude Code. Lately, it has been filled with posts from supposed “former Claude users” celebrating their move to OpenAI Codex, a competing programming assistant launched in May. The subreddit became so flooded with these testimonials that one Redditor quipped:

“Is it possible to switch to Codex without posting about it here first?”

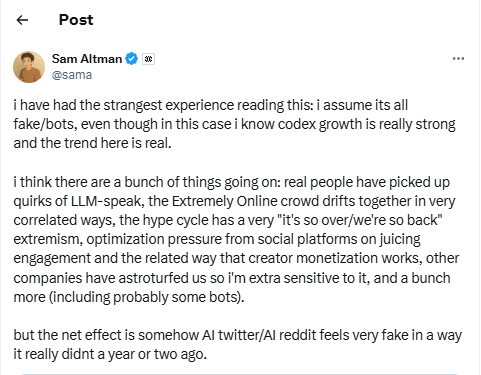

That prompted Altman to wonder: how many of these posts were genuine? “I have had the strangest experience reading this,” he admitted on X. “I assume it’s all fake/bots, even though I know Codex growth is real and robust.”

Why Everything Feels “Bot-Like”

Altman went further, analyzing why social media now feels eerily fake:

- Real people have picked up the quirks of LLM-speak, imitating how AI writes.
- Online fandoms often move together in highly predictable, correlated ways.
- The hype cycle swings between extremes of “it’s so over” and “we’re so back.”
- Platforms optimize for engagement metrics, incentivizing repetitive, polarized posting.
- Monetization structures encourage creators to churn out more content, faster.
- And yes, there are probably actual bots in the mix.

Ironically, humans are now accused of sounding too much like the AIs that were built to mimic them. OpenAI’s own models were trained heavily on data from Reddit, a company where Altman once sat on the board and remains a shareholder.

Astroturfing, Bots, and Online Trust

Altman also raised the specter of astroturfing—fake posts paid for by companies or contractors to make products look more popular. He admitted this possibility made him extra cautious about reading pro-OpenAI posts.

Although there’s no hard evidence of astroturfing in this case, sentiment on Reddit has been volatile. After the release of GPT-5, user sentiment shifted dramatically. Instead of the usual praise, threads filled with frustration: complaints about personality quirks, wasted credits, and buggy rollouts. Altman himself addressed these issues in a Reddit AMA, promising fixes. Still, the subreddit has not regained its earlier enthusiasm.

Is Social Media Too Fake to Trust?

Altman summed up his worry simply: “AI Twitter/AI Reddit feels very fake in a way it didn’t a year or two ago.”

This is not just paranoia. Imperva, a cybersecurity firm, reported that in 2024 more than 50% of global internet traffic came from non-human sources, much of it AI-driven. Even X’s own Grok chatbot has cited estimates of hundreds of millions of bots on the platform.

That raises uncomfortable questions: If much of the content online isn’t human, can communities still function the way they used to? And if bots can form echo chambers on their own, as researchers at the University of Amsterdam found when they created a bot-only social network, does it even matter if humans are involved?

A Hint at OpenAI’s Next Move?

Some observers believe Altman’s musings weren’t just random. In April, The Verge reported that OpenAI was quietly exploring its own social media platform, potentially to rival X or Facebook. Could Altman’s “everything feels fake” moment be laying the groundwork for a future launch?

Whether or not OpenAI is building its own network, Altman is tapping into a real anxiety. Social media has always had bots—but with the rise of LLMs, distinguishing authentic voices from synthetic ones is harder than ever.

FAQs

1. What did Sam Altman say about bots on social media?

Altman said bots have made it nearly impossible to know if social media posts are written by real people, especially in AI-related subreddits.

2. Why does social media feel “fake” now?

Altman believes it’s a mix of real people adopting AI-like writing styles, engagement-driven algorithms, monetization pressures, and actual bots.

3. What is astroturfing, and why did Altman mention it?

Astroturfing is when companies (or contractors) post fake content to make a product look popular. Altman raised it as one possible explanation for the suspiciously uniform posts about Codex, though there is no hard evidence of it in this case.

4. How big is the bot problem on the internet?

Imperva reported that more than 50% of all web traffic in 2024 came from non-human sources, and X’s Grok chatbot has cited estimates of hundreds of millions of bots on X alone.

5. Is OpenAI planning to launch a social media platform?

The Verge reported in April that OpenAI is exploring a social network to rival X or Facebook, though Altman hasn’t confirmed it. His recent comments may hint at such a move.